How not to load test

Recently, SpectoLabs hosted a focus group in Amsterdam with some of the Netherlands’ top software test engineers. During the evening, one of the engineers told me a story. After some persuasion, he gave me permission to retell it, on the condition that it be used to teach others how to avoid the unfortunate events that haunt him to this very day.

A story of misery and lost earnings

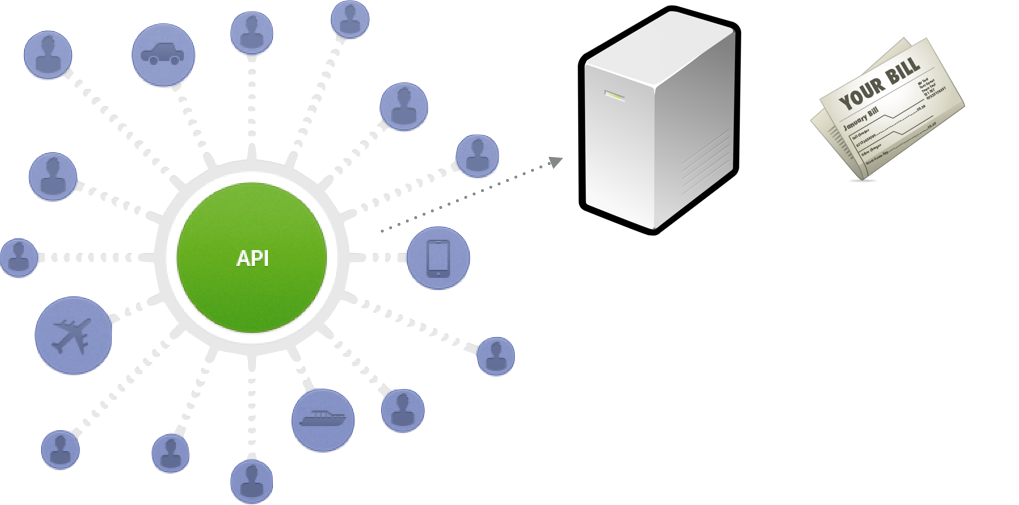

The story takes place at a company we will call PublicAPI Corp. Coincidentally, its main product was a public API. Behind the API sat an event-based billing system made up of microservices. Because PublicAPI Corp billed its customers based on API usage, this system was fairly important. Both systems were considered “legacy”, and our engineer was “the guy” everyone went to when they went down.

The engineer came in to work on a Monday morning and was greeted by a chaotic situation. PublicAPI Corp’s public API had gone down over the weekend. That alone was not a big issue, as the 24/7 operations team knew how to restart the application. The real issue was that the billing system was not outputting any billable data - and hadn’t done so since the outage.

Initial investigations suggested that the billing system was clogged up with unusable data. This was odd: the system was not externally accessible, it rejected any event that didn’t match a strict JSON structure, and it was well tested. The immediate fix was to manually flush all the data out of the microservices and their queues.

Our engineer got to work, taking copies of the data for future analysis and flushing the system. Soon, a flock of managers turned up. They thanked him for his efforts and confirmed that the billing system was once again producing billable data. PublicAPI Corp was making money again.

Then the discussion turned to the fate of the weekend’s billable data. Because the billing system’s queues had been blocked by unprocessable data, a huge chunk of billing data was now missing. Our engineer faced a barrage of questions. Where is that data? Can we get it back? Is there billable data somewhere in that dump of unprocessable data? Could customers be billed on an estimate of how much they were likely to have used the public API that weekend?

A disappointing breakthrough

Several days passed and the hunt for billable data expanded. Our engineer was now leading a small, cross-discipline task force. Operations people were combing through any logs they could find, looking for anything suspicious. Developers were churning through gigabytes of data in the hope of finding clues. Account managers were liaising with customers, without making it too obvious that they were fishing for clues to help PublicAPI Corp recover lost revenue.

Eventually, it became clear that one customer’s API usage had been abnormally high just before the outage. A conversation with that customer revealed that they had been running a load test against their own application and, inadvertently, against PublicAPI Corp’s API. The company collectively put its head in its hands.

Two wrongs don’t make a right (they make a strong case for API simulation)

PublicAPI Corp had spent around 20 person-days investigating the situation and had lost two days’ worth of earnings. Perhaps they were right to be annoyed with the customer, but they were also at fault. They had no rate limiting on the public API, and they didn’t provide a reliable sandbox for customers to test against. How else were their customers supposed to test their applications?
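To make “rate limiting” concrete, here is a minimal token-bucket sketch in Python. It is purely illustrative. Nothing in the story says how PublicAPI Corp’s API was built, and the `TokenBucket` class, the `allow_request` helper and the limits below are all hypothetical. Each API key gets its own bucket, which refills at a fixed rate. A short burst is tolerated, but a sustained load test from one customer would quickly start being rejected instead of flooding the systems behind the API.

```python
import time
from typing import Callable, Dict


class TokenBucket:
    """Hypothetical token-bucket limiter: refills at `rate` tokens/sec, bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)  # start with a full bucket
        self.clock = clock             # injectable so the sketch is easy to test
        self.last = clock()

    def allow(self) -> bool:
        """Consume one token if available; return False when the caller should be throttled."""
        now = self.clock()
        # Refill for the time elapsed since the last call, never exceeding capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# One bucket per API key, so one customer's load test cannot starve everyone else.
_buckets: Dict[str, TokenBucket] = {}


def allow_request(api_key: str, rate: float = 10.0, capacity: int = 20,
                  clock: Callable[[], float] = time.monotonic) -> bool:
    """Hypothetical gateway check: True to forward the request, False to reject it."""
    bucket = _buckets.get(api_key)
    if bucket is None:
        bucket = _buckets[api_key] = TokenBucket(rate, capacity, clock)
    return bucket.allow()
```

A gateway would typically answer a rejected request with HTTP 429 Too Many Requests. The rate and capacity values here are placeholders. Sensible limits depend on what the API and the billing pipeline behind it can actually absorb.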

To me, this sounds like a common problem. People want to test their applications in isolation to remove any uncertainty from their test environment, but that isn’t always easy, especially when the application relies on third-party APIs.

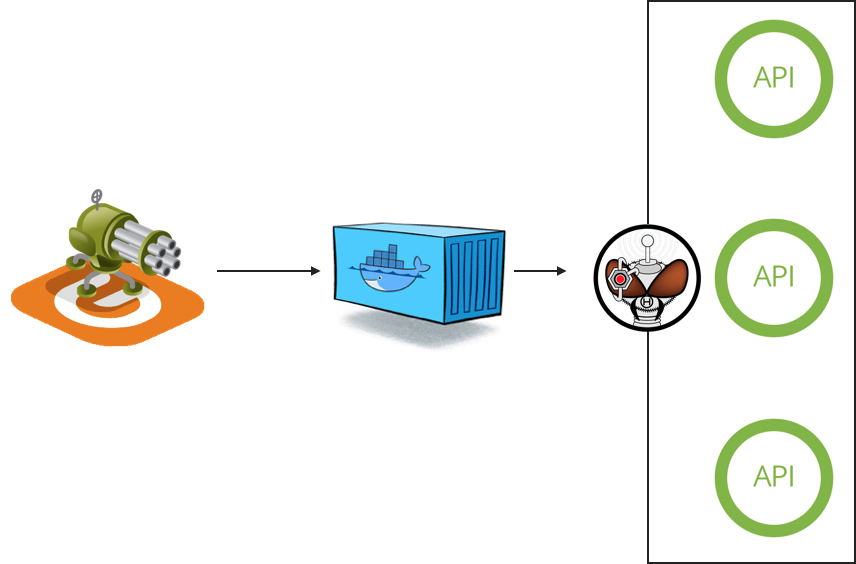

I talked to the engineer about how Hoverfly could have prevented this unfortunate situation. Hoverfly lets you create simulations of APIs by “capturing” real traffic. PublicAPI Corp could have given customers a set of Hoverfly simulations to load test against instead of the real API. Alternatively, the customer could have used Hoverfly to capture real traffic, built their own simulation from it, and pointed the load test at that simulation.

Instead of providing API simulations, PublicAPI Corp could perhaps have provided a sandbox API.

But what if a customer wanted to test how their application behaves when PublicAPI Corp’s API misbehaves? You can’t tell a sandbox API not to respond to your request… Hoverfly API simulations, however, can be configured or extended (using middleware) to inject latency, random failures, malformed responses and all kinds of other unexpected behaviour into your test environment.
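As a rough sketch of the middleware idea, the Python script below could act as fault-injecting Hoverfly middleware. It makes some assumptions, so check them against the middleware documentation for your Hoverfly version. It assumes that Hoverfly passes a JSON request/response pair to the middleware on stdin and reads the possibly modified pair back from stdout. It also assumes that the response object carries `status` and `body` fields. The `inject_chaos` function and its default failure rates are hypothetical choices made for illustration.

```python
import json
import random
import sys
import time
from typing import Any, Callable, Dict


def inject_chaos(pair: Dict[str, Any], rng: random.Random,
                 sleep: Callable[[float], None] = time.sleep,
                 failure_rate: float = 0.1, malformed_rate: float = 0.05,
                 max_delay_secs: float = 2.0) -> Dict[str, Any]:
    """Add random latency, then maybe swap the simulated response for a failure or a truncated body."""
    # Latency: delay every response by a random amount up to max_delay_secs.
    sleep(rng.uniform(0.0, max_delay_secs))

    response = pair["response"]  # assumed key, see the lead-in
    roll = rng.random()
    if roll < failure_rate:
        # Random failure: pretend the upstream API is unavailable.
        response["status"] = 503
        response["body"] = ""
    elif roll < failure_rate + malformed_rate:
        # Malformed response: cut the body in half so a JSON client gets invalid data.
        body = response.get("body", "")
        response["body"] = body[: len(body) // 2]
    return pair


def main() -> None:
    raw = sys.stdin.read()
    if not raw.strip():
        return  # nothing was passed in, so there is nothing to transform
    pair = json.loads(raw)
    print(json.dumps(inject_chaos(pair, random.Random())))


if __name__ == "__main__":
    main()
```

With middleware like this registered while Hoverfly runs in simulate mode, roughly one response in ten would become a 503 and one in twenty would arrive truncated, and every response would be delayed by up to two seconds. This sketch ignores responses whose bodies are stored in an encoded form.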

In conclusion

Public API vendors must expect their customers to want to load test their applications. Public API consumers should try not to inadvertently load test the external APIs they depend on. Simulating APIs can save engineers days of pain and misery, avoid significant financial losses, and make the world a better place.

Hoverfly is free, open-source and available on GitHub. Questions, suggestions and contributions are very welcome: just raise an issue!